Military Lets New IT Recruits Skip Boot Camp

Right now the cyber security war is being waged from behind desktop displays at all hours of our lives. Due to the drastic increase in cyber threats, the military has been scrambling to find better footing in the war on cybercrime. The most recent initiative to be mentioned is the possibility of letting recruits who are headed for the information technology field join the military without going through the intensive boot camp training that is required of every military recruit. Typically, every new recruit, regardless of occupation, is required to go through a six month boot camp that puts the individual in peak physical condition, followed by a six month technical school, before working for the United States military. Much like the rest of the world, the US military is having a difficult time filling positions in cyber security. Right now everyone is rushing and competing to fill more and more cyber security positions, not just in the military but in privately owned cyber security companies as well. Just recently the US Cyber Command told Congress that in order to meet its 2018 deadline for reaching full operational capacity, it may have to make compromises with the training regime. Most people who have the IT capabilities that the military is looking for are not particularly interested in the five to ten year development cycles that it currently offers. Many of the existing conditions of joining and serving in the military turn away individuals who are considered “cyber warfare-ready,” so the proposal is to let these people skip boot camp training and to assign rank and pay grades based on the individual’s skill level.
Hopefully this will help convince more IT specialists to join the military, but even with the new deal that these highly sought-after people are being offered, the US military may still lose recruits to private companies that pay higher salaries. It is very difficult to make the restrictive and formal atmosphere of a US military IT job look appealing to techies who are more often recruited by more glamorous private companies, but hopefully these new adjustments will help the military make its 2018 deadline.


The Future of Cyber Security Employment

It is easy to see that humans are the weakest link in cyber security. Considering that ninety percent of cyber security incidents involve human error, it is no wonder that technology companies have been looking for more efficient ways of guarding our information. Not only are humans somewhat inefficient at creating secure cyber security methods, but there is also an expected shortage of 1.5 million professionals needed to fill current and future positions at these companies. We already use cognitive and artificial intelligence technology to help consumers filter spam and spot phishing websites, so it only makes sense to take it a step further and apply artificial intelligence to cyber security jobs. Keeping humans in their current positions at cyber security companies as code monkeys is not only inefficient, it is also not very cost effective. On average, a security operations center handles hundreds of thousands of security risks in total, with only about two hundred thousand security events per day being considered important. Cyber crime costs the world economy four hundred and forty-five billion dollars each year, and that cost is only expected to increase in future years due to the drastic shortage of experienced professionals.

Some people may argue that artificial intelligence will end up destroying many jobs, but in fact its presence in the cyber security world is predicted to multiply the number of available positions. These new collar jobs wouldn’t even require a four year degree in computer science to have an influence within the security operations center. The pool of candidates for this new line of jobs could be very broad. Companies would only be looking for individuals who have strong ethics, curiosity, problem-solving skills, and of course risk analysis skills. All of the other requirements for the job could be taught on site, at community college, or even in vocational skill programs. Once the company has motivated individuals, they would then only need access to the artificial intelligence system. They would use it to investigate malware, viruses, or other risks and use that information to combat the issue. The artificial intelligence would basically act as an assistant to the employee and speed up the cyber security process.

Perdix

A giant swarm of drones all working together to achieve a single goal or complete a single mission may seem like part of the plot of a sci-fi movie, but it is, in fact, a reality today. On January 8th the Naval Air Weapons Station China Lake tested Perdix using three F/A-18 Super Hornet fighters, which released one hundred and three mini drones at Mach 0.6. Perdix is essentially a swarm of artificially intelligent drones that can make decisions based on the data each individual drone collects. Even if one or a few drones were to crash, the swarm itself is smart enough to regroup and adjust to the loss. Also, the Perdix drones are made out of cheap and easy to replace 3-D printed components and are capable of picking up and processing information faster than the human brain.
Perdix was originally developed by students from the Massachusetts Institute of Technology, inspired by commercial smartphones, for a $20 million Pentagon investment. Each unit is fairly easy and cheap to make, and the program has received very favorable feedback from the testing teams. Perdix is, in fact, one of the most feasible and closest ongoing options we have for AI technology that the government can use in action. None of the drones need to be controlled by humans in any way, and they do not need exact orders to perform a mission; the units as a whole only need a general order to complete a task. From that point on the swarm can figure out all of the specifics on its own.

Perdix can be used in many real life situations such as search missions, as decoys, and with design changes even in lethal situations. Using the Perdix system as a decoy against enemy troops can be very effective and not that much more expensive. The user would only need to equip the drones with electronic transmitters in order to jam enemy radars and cause confusion on the enemy side. The broad range of uses that Perdix could be put to is a little overwhelming, even frightening, to think about. Altering the design of a Perdix drone to make it a potentially lethal mechanism seems to be very simple for an engineer, but luckily laws and morals stand in the way of this possibility for the time being.

A Big Dog

Life downrange during a war can be very stressful and dangerous. Every day soldiers risk their lives during operations in war zones. In very dangerous zones there is no guarantee that a soldier will come back alive. Humans are put at risk of death around bullets and weapons, but robots can’t die, at least not like humans can. This is why Boston Dynamics, a company owned by Google, developed the Big Dog, an artificially intelligent military pack mule. This 160 pound quadruped robot is a highly mobile system of sensors that can detect enemy units in the immediate area. Not only that, but it can also carry up to four hundred pounds of equipment while using only electric power. Depending on the design, Big Dog could also extract troops, depending on their level of injury.

The Big Dog can traverse a multitude of terrains, including stairs and other indoor terrain as well as natural environments, without losing balance or colliding with others. Even after receiving a kick from a full grown human, the robot dog can recalibrate its stability and save itself from falling over. The developers have been training these machines to recognise each other by having them bump into one another, learn from these encounters, and improve their mobility. Big Dog needs little to no help when it comes to moving and staying upright, but its mobility does not stop at being capable. The walk cycle of these machines is very life-like and similar to that of actual dogs, and when you include the emergent collective behaviour, the outcome can be very unpredictable. It’s possible that Big Dog’s technology and programming could be used to function much like the Perdix swarm drones, to serve as defensive mechanisms, and possibly even to end up as responsive as a human. Unfortunately, the military abandoned the Big Dog in 2015 for the time being because the pack mule is far too noisy to use in action. Though its functions and capabilities hold a lot of potential, the noise means the Big Dog threatens to give away the troops’ position. Though the technology may still be too immature for use in war, it shows promise for use in the future.

Online Freedom of Speech

Since the creation of social media there have been many positive and negative impacts on the societies that use the web. Many people are aware that not everyone is true to who they are when logging in online. Studies often show that some people feel safer communicating with others through the web than meeting in real life. This can be comforting or even empowering for some people, but it can also make people feel safe creating negative interactions as well as positive ones.
Many people, especially in online games and social media chats, are well aware of the dangers of internet trolls, cyber bullies, and people who are just flat out appalling. These unfavorable interactions are considered common in today’s cyber world and have ultimately led to misinformation, extreme emotional distress, and in the worst cases even suicide. In the online world it is not hard to find people who feel safer speaking from behind the impersonal screen of a computer and use that sense of security to make themselves feel bigger by harassing individuals online, sometimes even to the point of urging them to kill themselves in real life. Last summer, Pew and Elon University began researching the predicted outcome of online negativity and ended up surveying 1,500 individuals who were considered to be technology experts or otherwise associated with the issue. Forty-two percent of the respondents believed that the future of online interactions would remain the same, while thirty-nine percent believed that negative interactions would continue to fester and spread in our societies. The remaining nineteen percent believed that the amount of online harassment and distrust would go down in the coming years compared to how it is today.

The survey resulted in four different possible conclusions, one of which stated that the online atmosphere will remain more on the negative side because trolling and the like attract attention to websites and make the views go up. This applies not only to straightforward trolling but also to other types of uncivil discourse such as misinformation. Rising issues involving negative experiences on the internet lead to the question of whether or not people should be allowed absolute freedom of speech on the internet. Already the public has recognised the influence that some governments have over tech companies regarding censorship and privacy. Being able to express one’s opinion freely and post information is part of the basic right of freedom of speech. Restricting any aspect of the internet or a person's rights in America would definitely cause an uproar in the community. Simply restricting one aspect of the internet and not touching another would bring up many complications, not only for the public but also for the government and tech industries. As it stands now, the monitoring of each website’s content rests entirely on the shoulders of the tech companies. The spread of negative atmospheres online is not predicted to decrease, but over time perhaps there will be a more effective method of protecting internet users from harassment and misinformation as a whole.

Facebook’s New AI Assistant

Last December Facebook began testing a new feature in its messaging system called M. This new feature is an artificial intelligence messenger assistant which analyzes an individual's conversation, searching for keywords that trigger M’s suggestions. M can help with a range of tasks on your behalf, including making payment requests, sending stickers, making polls, and using transportation apps such as Uber or Lyft.
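As a rough illustration of the keyword triggering described above, here is a minimal Python sketch. The keyword table and the `suggest` function are made up for this example; Facebook has not published how M actually picks its suggestions, and the real system is surely far more sophisticated than a fixed word list.

```python
import re

# Hypothetical keyword table: the real triggers M uses are not public.
SUGGESTIONS = {
    "owe": "request a payment",
    "pay": "request a payment",
    "ride": "order a ride",
    "vote": "create a poll",
    "thanks": "send a sticker",
}

def suggest(message):
    """Return the suggestions triggered by keywords in one message."""
    # Lowercase the message and split it into plain words.
    words = re.findall(r"[a-z']+", message.lower())
    found = []
    for word in words:
        action = SUGGESTIONS.get(word)
        # Offer each suggestion only once, in the order it was triggered.
        if action and action not in found:
            found.append(action)
    return found

print(suggest("You owe me $20, can you pay me before we get a ride?"))
# prints ['request a payment', 'order a ride']
```

Even this toy version has to read every word of every message to work, which is exactly the privacy trade-off at stake.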
The first run in America will come within the next year for the few who are invited to beta test the iOS and Android versions of M. M’s suggestions draw on the experience it has accumulated from analyzing a great multitude of conversations and requests over about eighteen months, making it a powerful tool for prediction. To use M it is, of course, necessary for the user to fully accept the fact that their Facebook conversations are no longer their own; they already belong to Facebook. In this day and age people debate the idea of their own privacy on the web, and many of them find it overbearing, especially in recent years. In an age where a good amount of our social interactions take place over the web, it is time to think about who really owns the conversations one has with another person online. M is just one of many steps by technology companies toward giving users a more convenient experience at the cost of the individual's privacy.

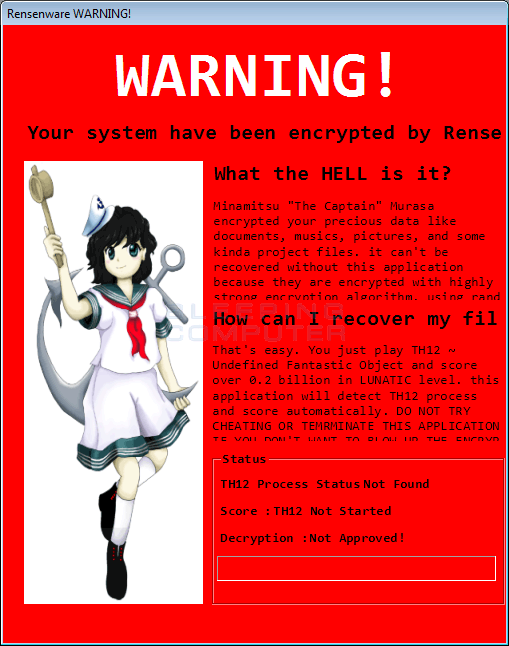

On one hand, M could greatly improve the experience of Facebook users. On the other hand, M’s abilities could make many users uncomfortable. When downloading the Facebook Messenger app, the user must first agree to its terms of use, a long and convoluted document stating that the app has access to the phone’s mic for conversations and to the camera for sending photos. For M the terms of use would be very similar but would extend to many more of the user’s personal apps, such as Uber or one’s banking app. For someone who does not want to dig too deeply into the details of app permissions, this can seem very intimidating. Having M as an artificial intelligence assistant does sound very exciting and helpful, and those who are skeptical of having their conversations analyzed by Facebook can mute the bot through the settings, though this still does not guarantee the privacy of their conversations.

The Anime Ransomware

It goes without saying that almost everyone who uses a computer knows of or has had experience with external threats such as malware and viruses, but lately a different type of malicious software that people may not know about has become more prominent. Ransomware is a type of malware that infects a computer, encrypts the user's files, and forces the user to pay a sum of money to regain access to the locked files. Rensenware is a new kind of ransomware developed by an individual who calls himself Tvple Eraser. Instead of making the infected user pay money to access their files, Rensenware requires them to reach a high score of two hundred million points on Lunatic level in an anime bullet hell shooter game called TH12 - Undefined Fantastic Object. The creator has apologized for creating this ransomware, as he had only intended it to be a joke between friends and fans of the game.
Since then he has replaced the ransomware with a safe version which isn’t able to forcibly lock one’s files, but with the original code now floating around on the web it is hard to say whether or not the ransomware could become much more malicious. In the end it shows how important it is to be conscious of what one puts onto the internet. Even if the creator produced this software without actual ill intent and even tried to mend the issue, the code itself was made accessible to many people who have the potential to cause real damage to people's computers. Alongside the knowledge of how to create software, it is necessary that the individual also think about the actual potential of the product before putting it to use in real life. It just goes to show: THINK BEFORE YOU ACT!

Robotic Rights

Let’s face it, we have all asked the question: what really makes us alive? Philosophers, scientists, and religious leaders all have their own distinct answers to the question, but are any of them truly right or wrong? Well, that question cannot be answered yet because, much like any theory, most of our beliefs lack hard evidence. Even now neuroscientists are probing the secrets of human consciousness and what makes us sentient. As it stands now, we struggle to teach machines the difference between right and wrong. These computers, which strive for some sort of moral compass, are described as our modern day artificial intelligence. We can teach these systems to tell different options apart by using a type of reinforcement learning in which good performance is reinforced by a virtual reward. Though the technology is still underdeveloped, the algorithms are becoming more and more life-like every day.
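To make the idea of a virtual reward a little more concrete, here is a minimal sketch in Python. The three options and their reward values are invented purely for illustration, and this is only a toy trial-and-error learner, not how any real moral-reasoning system is built.

```python
import random

def train(rewards, episodes=2000, epsilon=0.1, step=0.1, seed=0):
    """Learn a value estimate for each option from trial and error."""
    rng = random.Random(seed)
    values = {option: 0.0 for option in rewards}
    for _ in range(episodes):
        if rng.random() < epsilon:
            # Explore: occasionally try a random option.
            choice = rng.choice(list(values))
        else:
            # Exploit: otherwise pick the option that looks best so far.
            choice = max(values, key=values.get)
        # The "virtual reward" nudges the estimate for the chosen option.
        reward = rewards[choice]
        values[choice] += step * (reward - values[choice])
    return values

# Hypothetical reward table: good behaviour is rewarded, bad is penalised.
REWARDS = {"help": 1.0, "ignore": 0.0, "harm": -1.0}

learned = train(REWARDS)
best_option = max(learned, key=learned.get)
print(best_option)
```

The program is never told which option is "right". It only sees the rewards, so whatever sense of right and wrong it ends up with comes entirely from the person who wrote the reward table.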

Our AI robotics still lack the ability to feel or empathize like we humans do, but what will happen when we evolve these machines to be able to perceive, feel, and act all on their own? It is easy to say that a robot has no moral value because it is only programmed to feel and is made from metal rather than flesh and bone, but is that where the real value of life lies? If a being were to act, speak, and feel the same as a human does, some may say that the being is also deserving of rights. Not just in the past but even now, some people do not consider other races of our own species to be human. Even if another person looks different on the outside, they still have all the same inner workings of feelings, morals, and even anatomy. People often dismiss those who are different as being inhuman. These beliefs in inequality are called racism and have led to the torture and oppression of many people. The rise of artificial intelligence, or even the possibility of finding an alien race, raises the topic of speciesism. Speciesism was defined by Peter Singer in 1975 as “a prejudice or bias in favour of the interests of members of one's own species and against those of members of other species.” Even if a being is not considered human, does it still deserve basic rights? Currently many people advocate for animal rights and against the abuse of animals. If artificial intelligence were to reach a stage of actual sentience, should basic rights be extended to it as well? Many people may say no because the entity could be made out of the same thing that their toaster is made of, but others may say yes because they see another sentient being and not simply a machine. I believe that if a truly sentient artificial intelligence were to surface, the entity should have at least basic rights to protection and a means of living, so long as it can actually feel and act on its own.
We are a long way from being able to create a great artificial intelligence, so until there is proof of an object being able to feel on its own, I believe that robots and objects have no need for basic rights. If an object cannot feel or act freely on its own then there is nothing to protect because it is all programmable.

Since the first programmable computer in the 1940s, myths and rumors about the possibility of Artificial Intelligence have been churning in our society. From sci-fi movies to books, people have been slowly accepting the fact that AI is going to become a reality. Some people look forward to this advancement while others see it as the end of the world as we know it. We have not yet reached that reality, but as of today we humans are on the cusp of cracking this controversial code. We already have a type of technology that we have dubbed “AI,” but what really defines Artificial Intelligence: the ability to produce emotions, to follow a moral compass, or even to physically feel? We do not currently have technology that allows any of those three possibilities, but they are all being researched all over the world. Right now the American government, specifically the Office of Naval Research, is offering $7.5 million in grant money over five years toward the creation of an Artificial Intelligence system which can follow a moral compass and determine right from wrong. Since 2012, the UN has held that unmanned machinery is incapable of making lethal decisions or acting in a lethal situation.

The military currently plans to use this technology in machinery on the battlefield that can help injured parties. In order for this project to succeed, the machines must be able to consciously decide whether an individual should be evacuated or receive immediate medical attention first. Recently we have been making great strides toward a finished product, but the field of ethics is still trying to catch up with the possible outcomes of this technology. Many questions arise as to how this technology will be applied in other areas of computer science and whether the decisions of these programs are even accurate enough to use for such an important task. Others argue that with the advancement of drones, autonomous vehicles, and missile defense, this project is absolutely necessary for the military to advance technologically. The predictability of these new robots will be essential to their application and to how society views them. Even if this new strain of Artificial Intelligence is able to make conscious decisions distinguishing the injured from the near dead, it is very possible for it to backfire on us. If this technology fails when it is needed most, it could lead to the death of many individuals during a crisis. It is nearly impossible to predict every single situation these robots might encounter. Because this cannot be predicted with one hundred percent certainty, it leaves a lot of room for unpredicted results and a higher chance of failure. Such discrepancies in actions could cost one person, if not many, their lives. In the end one should really question whether or not one is comfortable allowing machinery to make lethal decisions for oneself and others.

Tracking Your Health Down The Rabbit Hole?

The latest lifestyle trend in California is physical health.
Not just measuring how healthy you feel or look, but actually counting every step, calorie, and gram of fat and sodium, how much water you drink, and your heart rate. For many people, the details of their physical health are an obsession. The media glorifies the use of special apps and wearable devices, such as the Fitbit, UP, or Nike’s FuelBand, to keep a record of these details and make users feel like they have better control over the exchange of energy within the body. Keeping oneself healthy is key to improving one’s lifespan and is also a good lifestyle choice for keeping one’s mental health at its peak, but how useful are these wearable health devices for actually losing weight or living healthier? Studies have shown that wearing a Fitbit does not, in fact, usually promote weight loss or healthier choices (Urist, 2016). In fact, people have been recorded not choosing healthier options like taking the stairs or eating salads, and making unhealthy choices instead. Because they can see how many calories they burned walking from work to their car, individuals tend to assume that they can allow themselves more unhealthy habits, which tends to cause weight gain. Though Americans can access these details about their lives, many are unsure how to use that information. That being the case, one may ask if there is really any point to using these devices. Some individuals actually use a Fitbit to record health details to help with medical conditions, such as diabetes. Usually people who do need this wearable technology for health purposes are taught by their doctors or healthcare professionals what this information means and how to react to it. Whether or not a person is using a Fitbit or FuelBand for medical purposes, one must ask how accurate the information it gathers is and how this information can be used legally.
In Canada, there is currently a court case in which a personal trainer intends to sue for personal injury and use the data from her Fitbit as evidence. It is incredibly easy to manipulate the data recorded by a Fitbit, and each person’s vital signs are as unique as a snowflake, so it is difficult to say how viable Fitbit data will be in court. Plus, because the technology only sits at the wearable level and does not record this data from within the body, it’s hard to say how accurate or reliable the data is. Regardless of whether one wishes to use Fitbit data in court, the reliability of this technology could still leave something to be desired. In the end, most of these athletic devices are just glorified pedometers.

Urist, J. (2016, May 17). Fitness band frustration: Experience weight gain with trackers. Retrieved February 15, 2017, from http://www.today.com/health/fitness-band-frustration-users-complain-weight-gain-trackers-t66146
